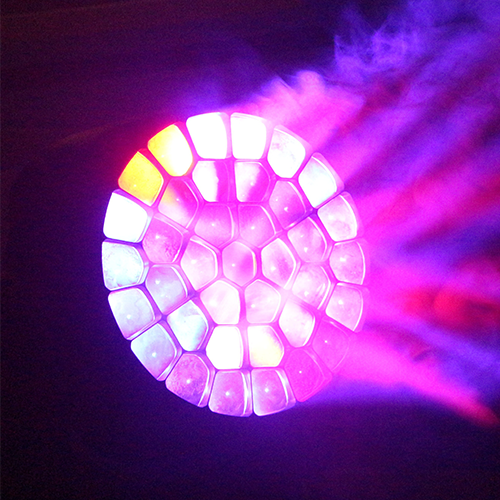

The Astro Spatial Sara II processor creates fully immersive 3D soundscapes

ELEVATE IMMERSION WITH REAL TIME TRACKING AND 3D SOUND

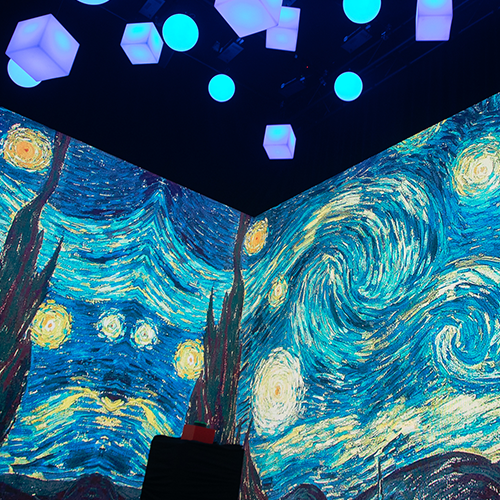

Immersive event spaces can be built with many different technologies and techniques. We’re proud to introduce the BlackTrax system and the Astro Spatial Sara II processor. Together, these technologies can track presenters, objects, and even attendees, creating memorable experiences that fully immerse everyone involved.

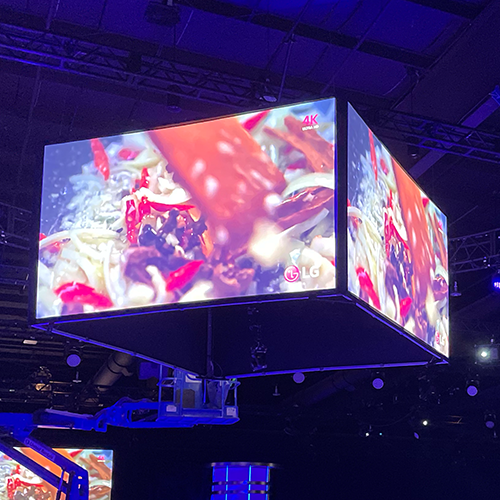

BlackTrax opens up many possibilities for tracking people and objects at an event. This vision-based system uses infrared cameras to capture the 3D location of beacons worn by people or placed on objects. The location data is then sent to connected third-party applications, such as robotic lights, audio processors, and media servers. When tracking presenters with robotic lights, BlackTrax can take control of the pan, tilt, and zoom of the moving lights in the space, following each presenter in real time. BlackTrax can also send its location data to media servers, which can use it to generate effects at the exact location of the trackable. The same tracking can drive video, so that content follows a presenter or attendee as they move around the event space.
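The way BlackTrax drives each lighting console or fixture isn't described here, but the geometry behind "following a presenter" with a moving light can be sketched. The Python below is a minimal sketch, assuming the fixture's position is known in the same coordinate system as the tracking data (metres, z pointing up) and using hypothetical pan/tilt conventions (pan 0° along +x, tilt 0° straight down). `Vec3` and `aim_fixture` are illustrative names, not part of any BlackTrax API. It also shows one way zoom could be derived: choose the beam angle that keeps a constant spot diameter on the target.

```python
import math
from dataclasses import dataclass


@dataclass
class Vec3:
    """A 3D point in metres, z pointing up (assumed convention)."""
    x: float
    y: float
    z: float


def aim_fixture(fixture: Vec3, target: Vec3, spot_diameter: float = 2.0) -> tuple[float, float, float]:
    """Return (pan_deg, tilt_deg, beam_angle_deg) to aim a fixture at a target.

    Hypothetical conventions, not BlackTrax's:
    - pan 0 deg points along +x and increases toward +y;
    - tilt 0 deg points straight down and increases toward the horizon;
    - the beam angle is the full cone angle that produces `spot_diameter`
      metres of light at the target's distance.
    """
    dx = target.x - fixture.x
    dy = target.y - fixture.y
    dz = target.z - fixture.z
    horizontal = math.hypot(dx, dy)          # distance in the floor plane
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    if distance == 0:
        raise ValueError("target coincides with the fixture")

    pan = math.degrees(math.atan2(dy, dx))
    tilt = math.degrees(math.atan2(horizontal, -dz))  # measured from straight down
    beam = math.degrees(2 * math.atan((spot_diameter / 2) / distance))
    return pan, tilt, beam


# Example: fixture hung 6 m up, presenter standing 3 m away along +x.
pan_deg, tilt_deg, beam_deg = aim_fixture(Vec3(0, 0, 6), Vec3(3, 0, 0))
```

In a live setup, a function like this would be re-evaluated on every position update, and the resulting angles mapped onto each fixture's own pan, tilt, and zoom control ranges, which differ from fixture to fixture.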

For audio, we use the Astro Spatial Sara II processor to create a fully immersive 3D audio experience. The position of each loudspeaker in the room is laid out in Astro Spatial, and the trackable is placed into that 3D space in real time using location data from BlackTrax. Just as with moving lights, this makes it possible to control exactly where the audio appears to come from, enhancing the immersive experience and placing attendees at the heart of the performance.
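The rendering algorithm inside Astro Spatial isn't described here. As a stand-in, the sketch below uses distance-based amplitude panning (DBAP), a general-purpose technique for placing a sound object within an arbitrary loudspeaker layout: each speaker's gain falls off with its distance to the object, and the gains are normalised so total power stays constant. The `dbap_gains` function, the 6 dB rolloff default, and the `blur` offset are illustrative assumptions, not Astro Spatial parameters.

```python
import math
from typing import Sequence

Point = tuple[float, float, float]  # (x, y, z) in metres, assumed convention


def dbap_gains(source: Point, speakers: Sequence[Point],
               rolloff_db: float = 6.0, blur: float = 0.2) -> list[float]:
    """Distance-based amplitude panning (DBAP) gains for one audio object.

    Illustrative stand-in only; not Astro Spatial's actual algorithm.
    rolloff_db: level drop per doubling of distance (6 dB ~ inverse distance law).
    blur: small spatial-blur offset in metres (hypothetical default) that avoids
          division by zero when the object sits exactly on a loudspeaker.
    Returns one linear gain per speaker; the squared gains sum to 1.
    """
    if not speakers:
        raise ValueError("need at least one speaker")
    # Exponent a such that amplitude ~ 1/d**a drops rolloff_db per doubling.
    a = rolloff_db / (20 * math.log10(2))
    distances = [
        math.sqrt(sum((s - p) ** 2 for s, p in zip(source, spk)) + blur ** 2)
        for spk in speakers
    ]
    raw = [1.0 / d ** a for d in distances]
    # Normalise to constant total power across all outputs.
    k = 1.0 / math.sqrt(sum(g * g for g in raw))
    return [k * g for g in raw]


# Example: four speakers in a square, tracked object slightly toward the first.
layout = [(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, -1.0, 0.0)]
gains = dbap_gains((0.5, 0.0, 0.0), layout)
```

Feeding each new tracked position into a function like this, and applying the resulting gains to the object's signal on every output, is one way a tracked performer's voice could be made to follow them around the room.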

Features of BlackTrax include:

Infrared LED beacons with unique light pulses for tracking

Up to 85 beacons or 255 tracking points simultaneously

Sensor Lenses can be secured to truss, walls, or other stationary objects near the tracked space

Setup is as easy as hanging the Sensor Lenses around the space; calibration can then be completed in 5 to 10 minutes

Features of Astro Spatial Sara II processor include:

Minimum of 32 configurable audio objects

Up to 128 output channels

Dante™ or MADI I/O

A range of redundancy options

Web browser-based control

Uses for real-time tracking and 3D sound can include:

Track presenters on stage and throughout the room with lights, audio, and/or video

Create immersive, full 3D audio experiences

Track attendees with video content that follows them